When 8 bits is not enough for lightmaps


The problem: lightmaps typically cover many pixels on screen and...

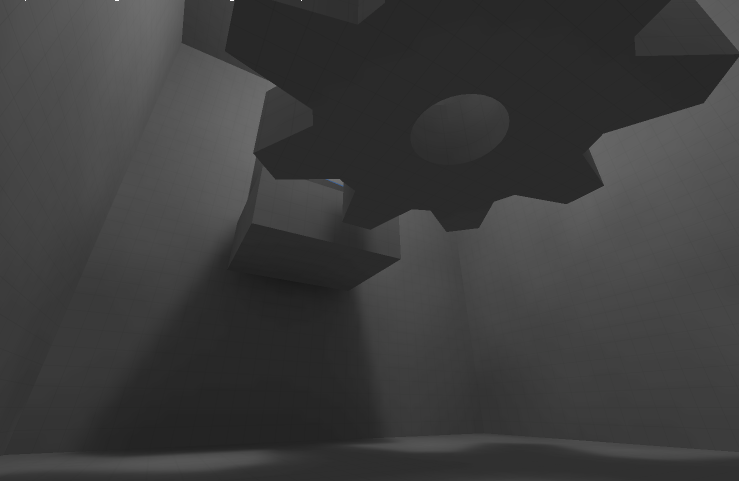

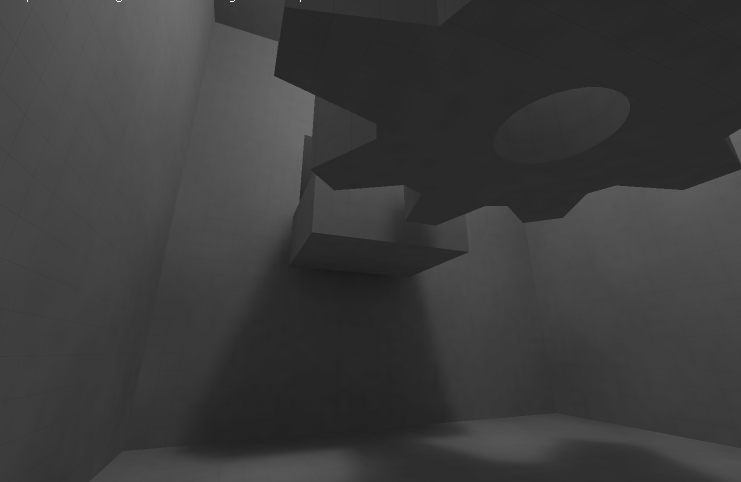

rounding shows ugly banding:

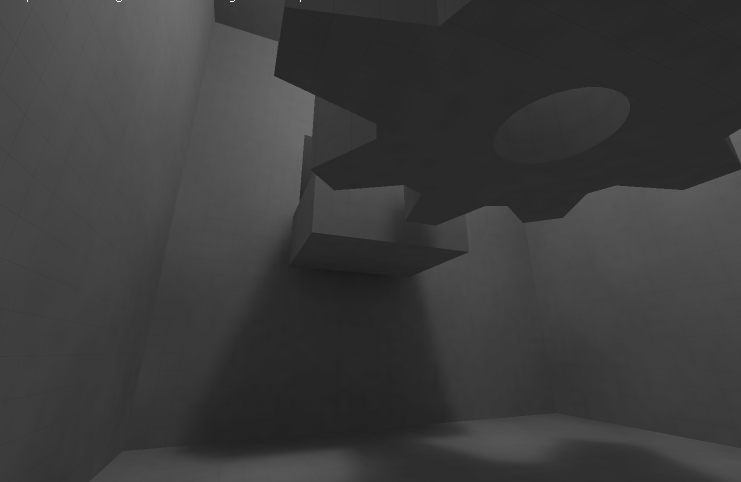

dithering lightmaps shows visible noise:

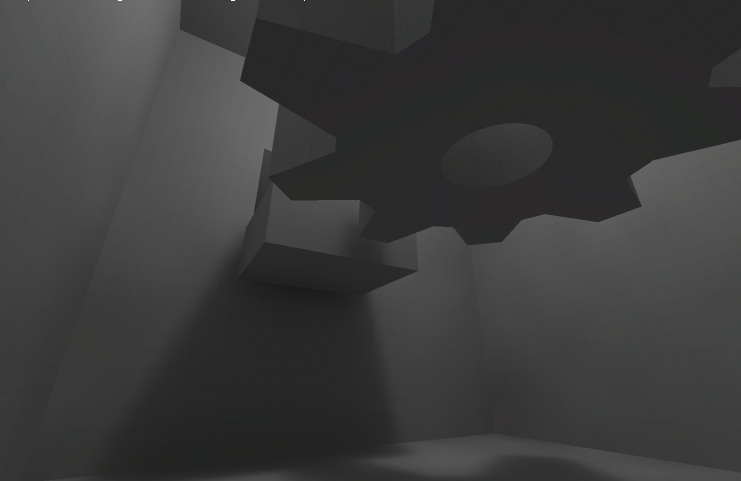

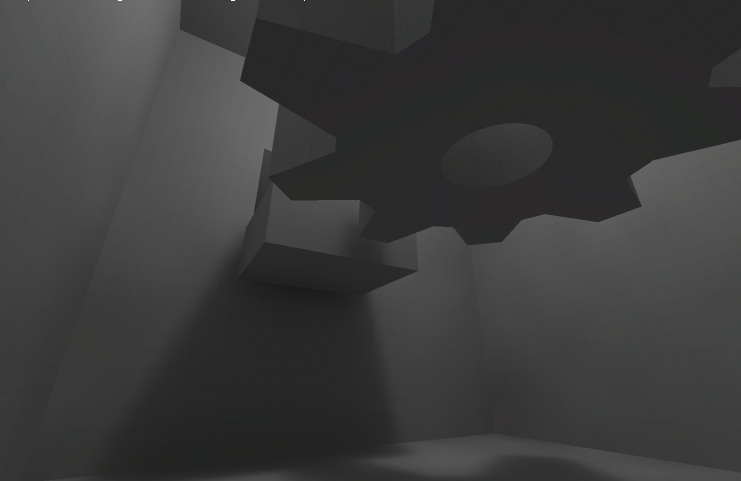

my solution: an rgb palette (a table with 1 bit per channel) has minor hue artifacts in dark areas, but the banding completely disappears:

How it works


It's actually quite simple: the lightmapper generates 32-bit floats, so I have enough information to work with.

Lightmaps are stored in gamma space to handle dark areas better (=pow(color, 1/2.2))

Instead of rounding the final texels, I truncate them first and average the fractions of the three channels:

obviously frac = value - trunc(value)

then I simply add gbrTable[intFrac = trunc(avgfrac*tableSize)] to the final color, which is assumed to be already truncated and in the 0..255 range
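The index computation can be sketched roughly like this. It is only an illustration, not the original code: the `TexelF` struct and the `avg_frac_index` name are made up here, the texel is assumed to be non-negative and already scaled to 0..255 in gamma space, and the final clamp is an extra safety measure that the text does not mention.

```c
/* Hypothetical float texel, already in gamma space and scaled to 0..255. */
typedef struct { float r, g, b; } TexelF;

/* Returns intFrac = trunc(avgfrac * tableSize), in the range 0..tableSize-1.
   Values are assumed non-negative, so a cast to int truncates like trunc(). */
static int avg_frac_index(TexelF t, int tableSize)
{
    /* frac = value - trunc(value), per channel */
    float fr = t.r - (float)(int)t.r;
    float fg = t.g - (float)(int)t.g;
    float fb = t.b - (float)(int)t.b;

    /* average of the three per-channel fractions */
    float avgfrac = (fr + fg + fb) / 3.0f;

    int intFrac = (int)(avgfrac * (float)tableSize);

    /* Safety clamp (an assumption, not part of the description above). */
    if (intFrac < 0) intFrac = 0;
    if (intFrac > tableSize - 1) intFrac = tableSize - 1;
    return intFrac;
}
```

With a table size of 8, a texel whose three fractions average to 0.5 lands on index 4, and a texel with no fractional part lands on index 0, so nothing is added to it.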

The table is straightforward to generate; in fact, its entries can be extracted directly from the bits of the quantized average fraction:

(assuming table size is 8)

blue += intFrac & 1;

red += (intFrac & 2) >> 1;

green += (intFrac & 4) >> 2;
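For completeness, here is a minimal sketch of the same bit extraction written out as an explicit 8-entry table plus the add step. The `Rgb8` struct and both function names are hypothetical. The saturation at 255 is also an assumption: the text only says the color is already truncated and in the 0..255 range, and without some guard a channel sitting at 255 would wrap around when 1 is added.

```c
#include <stdint.h>

/* Hypothetical 8-bit texel. */
typedef struct { uint8_t r, g, b; } Rgb8;

/* Fill the 8-entry table: bit 0 -> blue, bit 1 -> red, bit 2 -> green. */
static void build_gbr_table(Rgb8 table[8])
{
    for (int i = 0; i < 8; ++i) {
        table[i].b = (uint8_t)(i & 1);
        table[i].r = (uint8_t)((i & 2) >> 1);
        table[i].g = (uint8_t)((i & 4) >> 2);
    }
}

/* Add a table entry to an already truncated texel.
   Saturating at 255 is an assumption made for this sketch. */
static Rgb8 add_entry(Rgb8 texel, Rgb8 entry)
{
    Rgb8 out;
    out.r = (uint8_t)(texel.r < 255 ? texel.r + entry.r : 255);
    out.g = (uint8_t)(texel.g < 255 ? texel.g + entry.g : 255);
    out.b = (uint8_t)(texel.b < 255 ? texel.b + entry.b : 255);
    return out;
}
```

Entry 0 adds nothing, entry 1 adds only blue, entry 5 adds blue and green, and entry 7 adds 1 to every channel.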

The channel ordering (b,r,g) is deliberate and follows perceived luminance (blue 11%, red 30%, green 59%)
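One way to see why this ordering matters is to compute how much luminance each of the 8 entries adds, using the weights quoted above. The helper below is a hypothetical illustration (the name and the percent units are my own choice); it uses integer math so the numbers are exact.

```c
/* Luminance added by table entry i (0..7), in percent of one full 8-bit step,
   using the weights from the text: blue 11, red 30, green 59. */
static int entry_luma_percent(int i)
{
    int b = i & 1;          /* bit 0 -> blue  */
    int r = (i & 2) >> 1;   /* bit 1 -> red   */
    int g = (i & 4) >> 2;   /* bit 2 -> green */
    return 11 * b + 30 * r + 59 * g;
}
```

For i = 0..7 this gives 0, 11, 30, 41, 59, 70, 89, 100. The added brightness rises with every step of the quantized fraction, which would not hold if, say, green were mapped to the lowest bit.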

We effectively enhance the 8-bit precision by using the individual channels to store the quantized fraction (= the error after truncation) as a 1-bit additive rgb color.

Maybe this is a known technique and it may even have a name, but "reinventing the WHEEL" can be fun.